📖 Bridging Generation and Training

A Systematic Review of Quality Issues in LLMs for Code

Large language models (LLMs) frequently produce defective code, with problems ranging from logic bugs to security vulnerabilities. While these failures are often treated as model-level limitations, empirical evidence increasingly traces their root causes to imperfections in the training corpora.

📢 News

- [2026-04] 🚀 The From-Data-to-Code repository is officially launched.

- 114 Primary Studies Reviewed

- 9 Quality Dimensions

- 18 Propagation Mechanisms

📖 Abstract

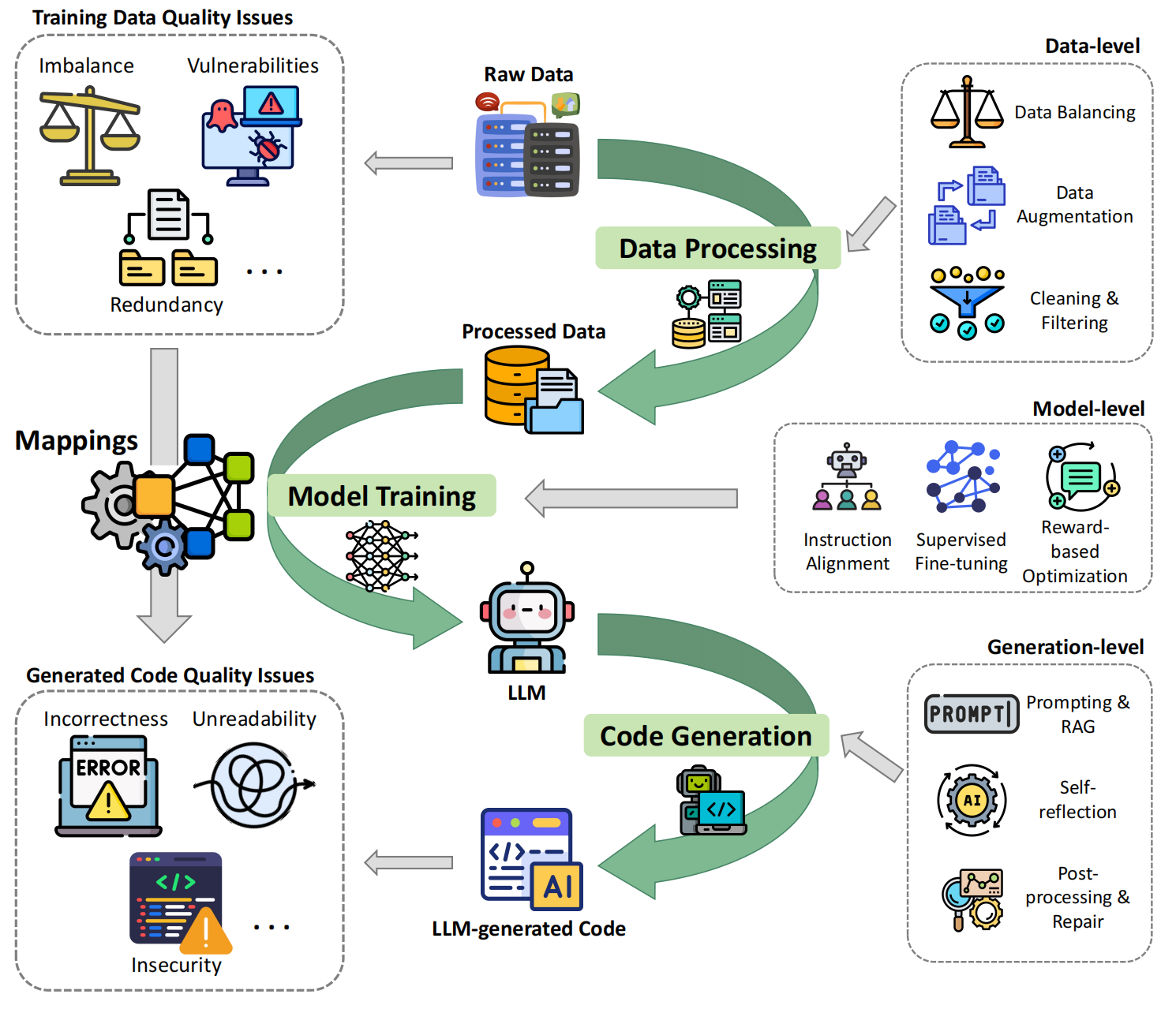

This paper presents a systematic literature review of 114 primary studies to investigate how training data quality issues propagate into code generation. We establish a unified taxonomy that categorizes generated code quality issues across nine dimensions and training data quality issues into code and non-code attributes. Based on this taxonomy, we formalize a causal framework detailing 18 typical propagation mapping mechanisms. Furthermore, we synthesize state-of-the-art detection and mitigation techniques across the data, model, and generation lifecycles.

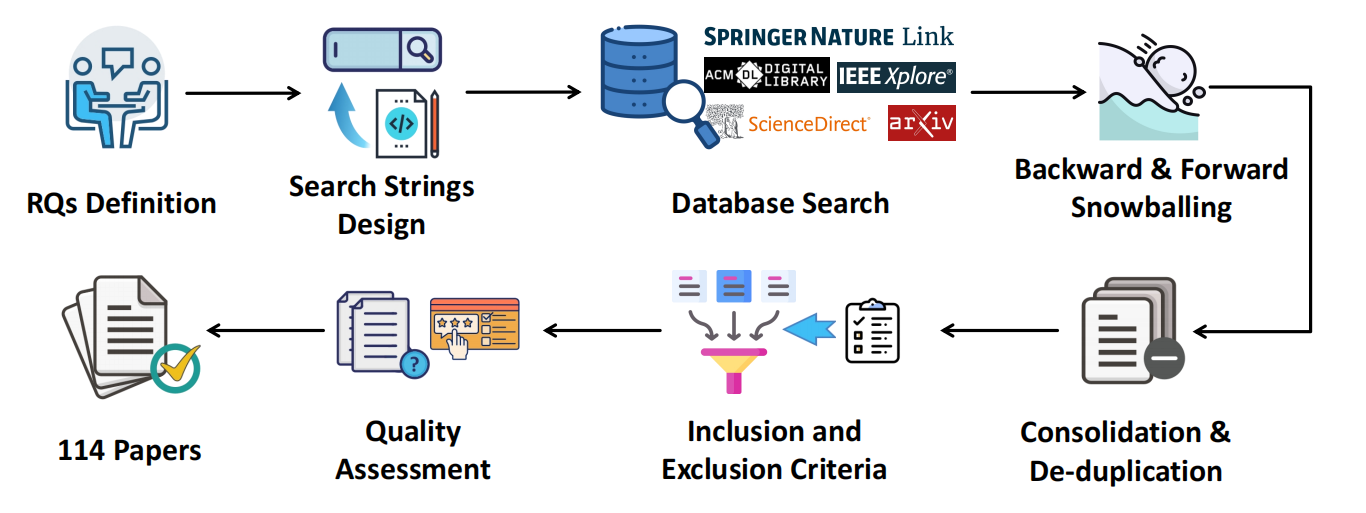

Fig. 1. Overview of the paper collection and filtering process.

Fig. 2. Conceptual Framework of Quality Issues and Mitigation in the LLM Lifecycle.

🤝 Contribution

We warmly welcome contributions from the community! If you have new research or have discovered missing classic papers, please follow these steps:

- Fork this repository.

- Add your paper to the section for the corresponding research question (RQ).

- Submit a Pull Request.